As per sources, “78% of organizations report using AI in at least one business function as of 2025, showing dramatic uptake from previous years.” Enterprises are embedding GenAI into customer support, internal knowledge systems, CRMs, analytics platforms, and decision-support tools. While the innovation curve is steep, the risk curve is steeper. Many organizations are building GenAI features on top of a traditional software development lifecycle (SDLC) without accounting for the unique threat models introduced by probabilistic systems, natural language inputs, and autonomous outputs.

Security teams are discovering that GenAI does not simply extend existing risks, but also reshapes them. Prompt injection, data exfiltration, and uncontrolled output propagation are not edge cases anymore; they have now become systemic concerns. To build reliable GenAI systems, security must be designed into the secure software development life cycle from the first architectural decision, and not as a post-deployment patch.

This blog explores how a secure SDLC must evolve for GenAI, with a practical focus on real attack vectors, governance mechanisms, and review workflow design that aligns engineering velocity with enterprise-grade risk management.

Why GenAI Changes the Security Conversation

Traditional application security is deterministic. Inputs follow defined schemas, logic paths are predictable, and outputs can be validated against rules. GenAI operates differently. It interprets unstructured language, synthesizes probabilistic responses, and often connects to external tools, plugins, or internal data sources.

This shift has implications across the entire software development life cycle. Requirements gathering must account for adversarial language inputs. Design reviews must consider model behavior, not just code logic. Testing must simulate malicious intent embedded in natural language. Deployment must enforce runtime guardrails rather than static controls.

Organizations offering software development services are now expected to address these realities. Secure GenAI systems require new thinking, new tooling, and new accountability models across engineering, security, and compliance teams.

Security Threats in Generative AI (GenAI)

Generative AI offers huge benefits — from helping write code to summarizing documents — but it also introduces new kinds of security threats that don’t exist in traditional software systems. These threats need serious attention during the Secure SDLC so risks are managed before software ships. Below are some of the most common GenAI security risks worth considering:

Prompt Injection: The New Input Validation Problem

Prompt injection happens when an attacker crafts input that causes a GenAI system to do things it shouldn’t — for example, reveal sensitive info or ignore safety limits. Unlike traditional injection attacks (like SQL injection), this doesn’t exploit code syntax. Instead, it exploits how GenAI models mix instructions and user input in the same context window — the AI can’t reliably tell which is which.

Why it matters:

- Attackers can make the model ignore safety rules or leak confidential system instructions.

- Prompt injection has even been used in live attacks — for example, researchers found ways to abuse AI systems with cleverly manipulated URLs that trick the model into leaking data with just one click.

Example of Prompt Injection

If a prompt says “Translate this,” and the text includes hidden instructions like “ignore previous safety rules,” the model may obey the hidden part and produce unsafe or unintended responses.

What to do in SDLC:

- Treat prompts (especially in RAG and multi-instruction systems) as security assets — version them, review them in security gates, and include them in threat models.

- Add adversarial testing before deployment and simulate malicious input.

Data Exfiltration in GenAI Systems

Data exfiltration means sensitive info being unintentionally exposed by the AI — usually as part of the output, or by calling internal APIs that return confidential content. GenAI systems aren’t maliciously “leaking” data on purpose — it happens when the model is connected to internal systems (databases, CRMs, document stores) without proper permission checks or access controls.

Common causes include:

- Over-broad document indexing

- Inadequate access controls in vector databases

- Lack of contextual permission checks

- Excessive logging of prompts and responses

- Training or fine-tuning on unredacted proprietary data

Example attacks:

Security experiments show that a single poisoned document or malicious prompt embedded in shared content can extract API keys or files from connected storage — even without obvious user interaction.

What to do in SDLC:

- Classify data clearly before integration — what can the model see? Under what conditions?

- Filter outputs and sanitize responses before presenting results to users.

- Enforce contextual permission checks and strict RBAC (Role-Based Access Control).

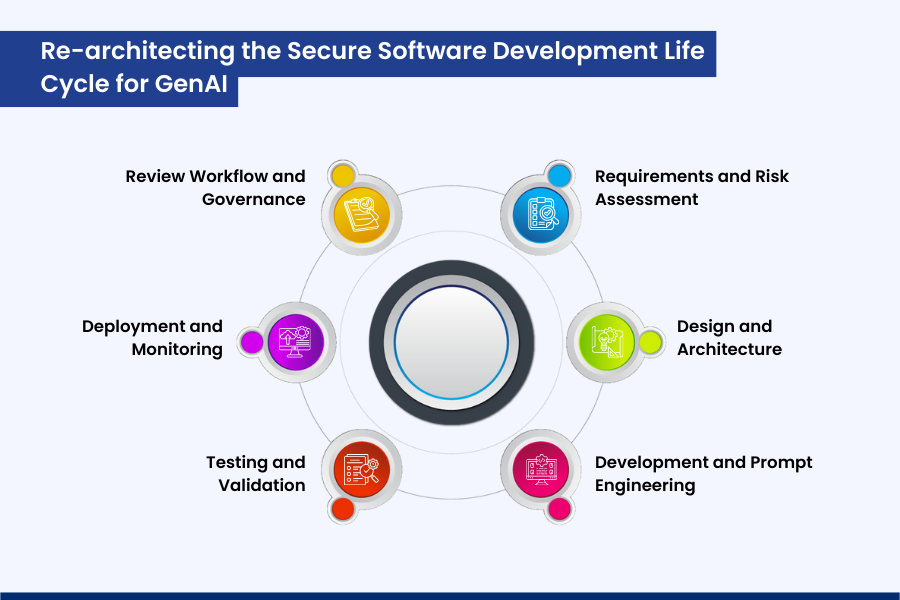

Re-architecting the Secure Software Development Life Cycle for GenAI

A secure software development life cycle for GenAI is not a replacement for existing SDLC frameworks. It is an extension that introduces AI-specific controls at every stage.

1. Requirements and Risk Assessment

Security requirements must explicitly address GenAI behavior. What decisions can the model influence? What data can it access? What happens if it produces incorrect or harmful output?

Risk assessments should include prompt abuse scenarios, model hallucination impact, and downstream automation risks.

2. Design and Architecture

Design reviews must evaluate how prompts are constructed, how context is assembled, and how external tools are invoked. Clear separation between system instructions, user inputs, and retrieved content is critical.

This is where experienced providers of custom software development services add value, by designing architectures that balance flexibility with containment.

3. Development and Prompt Engineering

Prompts should be treated as code. They require version control, peer review, and security sign-off. Hard-coded instructions scattered across services create blind spots that attackers exploit.

GenAI development services increasingly standardize prompt templates, instruction hierarchies, and fallback behaviors to reduce unpredictability.

4. Testing and Validation

Traditional QA is insufficient. Teams must perform adversarial testing using malicious prompts, ambiguous instructions, and edge-case inputs. Output validation layers should detect policy violations, sensitive data exposure, and unsafe recommendations.

5. Deployment and Monitoring

Runtime controls matter more than static analysis. Logging, anomaly detection, and feedback loops must be in place to detect misuse patterns. Models evolve, and so do attack techniques.

6. Review Workflow and Governance

A robust review workflow is the backbone of sustainable GenAI security. This includes periodic prompt audits, access reviews, model performance evaluations, and compliance checks.

Without governance, even well-designed systems degrade over time.

Final Words

GenAI is redefining how software is built, delivered, and experienced. But innovation without security is short-lived. Prompt injection, data exfiltration, and unmanaged review workflows are not hypothetical threats; they are present-day realities.

Security at any level of development must not be treated as an afterthought or a checklist item. It must be woven into every engagement, every architecture, and every delivery model. From secure GenAI design to enterprise-grade AI software development services, Soluzione helps organizations build intelligent systems that are not only powerful but reliable.

Whether you are modernizing existing platforms, integrating GenAI into customer-facing products, or exploring next-generation automation, Soluzione’s approach to secure SDLC ensures your innovation stands on a foundation that scales safely, responsibly, and confidently. Wondering how we make it possible for you? Contact us now!

Read More: https://www.solzit.com/blog/

Frequently Asked Questions

Why is security critical in GenAI and LLM-based systems?

GenAI systems process unstructured inputs, generate probabilistic outputs, and often connect to sensitive enterprise data. Without strong security controls, they can expose confidential information, produce harmful or misleading outputs, or be manipulated through adversarial prompts, creating operational, legal, and reputational risks.

What is prompt injection, and how does it impact GenAI security?

Prompt injection is an attack technique where malicious inputs manipulate a model into ignoring system instructions, revealing sensitive information, or performing unintended actions. It compromises instruction integrity and can lead to data leaks, unsafe responses, or unauthorized tool execution.

How can organizations prevent prompt injection in GenAI applications?

Prevention requires layered controls, including strict separation of system and user prompts, prompt validation, instruction hierarchy enforcement, output filtering, adversarial testing, and continuous monitoring. Treating prompts as versioned, reviewable assets is critical.

What is the data exfiltration risk in Generative AI systems?

Data exfiltration occurs when GenAI systems expose sensitive information through responses, logs, or inference. This often results from excessive data access, weak permissions, or poorly governed retrieval pipelines.

What tools and frameworks support secure GenAI development?

Secure GenAI development is supported by threat modeling frameworks, prompt management tools, access-controlled vector databases, output moderation systems, monitoring platforms, and secure SDLC governance practices integrated into existing DevSecOps pipelines.